What is the problem? Please be detailed.

I'm a water resources engineer using ODK Collect and Aggregate for a citizen science project in Nepal called SmartPhones4Water. Our Aggregate URL is www.s4w-nepal.appspot.com

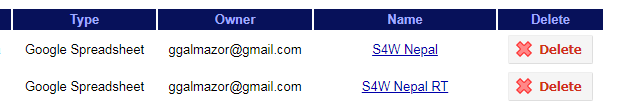

Roughly 1 week ago I noticed that the Google Sheet for our form S4W-Nepal (id: S4W-Nepal_v1.01) wasn't being updated with new data. When I signed into Aggregate, navigated to Form Management > Published Data, and selected S4W-Nepal, there was a red warning next to the publisher in question. I restarted the publisher, but it kept cycling between "paused" and something like "trying", as if it were stuck in some sort of infinite loop.

Then I tried deleting the publisher and creating a new one. The new publisher published the first 732 rows and then stopped completely (there are a total of 9000 records to be published).

Now, when I select S4W-Nepal from Form Management > Published Data, I get an error message that says:

s4w-nepal.appspot.com says:

Error: Problem persisting data or accessing data (Somehow DB entities for publisher got into problem state)

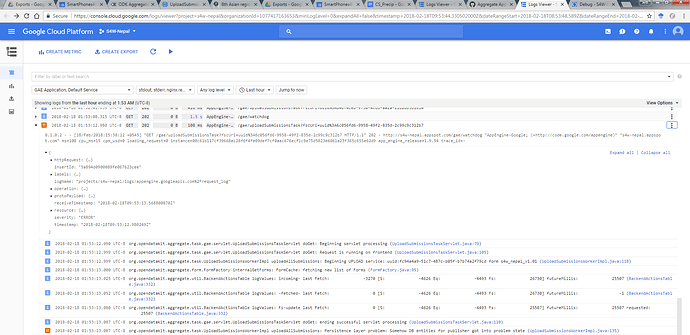

After spending some time on Google Cloud Platform looking at the error logs, it appears that the issue is at UploadSubmissionsWorkerImpl.java:135. The log entry reads: org.opendatakit.aggregate.task.UploadSubmissionsWorkerImpl uploadAllSubmissions: Persistence layer problem: Somehow DB entities for publisher got into problem state (UploadSubmissionsWorkerImpl.java:135)
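In case it helps with triage, here is a minimal sketch of how exported log text could be filtered for this error. It assumes the logs have been saved as plain text lines shaped like the one quoted above; the function name `find_publisher_errors` and the pattern are my own illustration, not part of ODK Aggregate or any Google Cloud tooling.

```python
import re

# Pattern modeled on the single log line quoted above (an assumption);
# other log entries may be shaped differently and will simply be skipped.
LOG_PATTERN = re.compile(
    r"(?P<cls>[\w.]+) (?P<method>\w+): (?P<message>.*) "
    r"\((?P<file>\w+\.java):(?P<line>\d+)\)$"
)


def find_publisher_errors(log_lines, needle="publisher got into problem state"):
    """Return parsed fields for every line that mentions the publisher error."""
    hits = []
    for raw in log_lines:
        text = raw.strip()
        if needle not in text:
            continue  # unrelated log entry
        match = LOG_PATTERN.search(text)
        if match:
            hits.append(match.groupdict())
    return hits


# The first sample line is copied from the log entry above; the second is filler.
sample = [
    "org.opendatakit.aggregate.task.UploadSubmissionsWorkerImpl "
    "uploadAllSubmissions: Persistence layer problem: Somehow DB entities "
    "for publisher got into problem state (UploadSubmissionsWorkerImpl.java:135)",
    "some unrelated log line",
]

for hit in find_publisher_errors(sample):
    print(hit["cls"], hit["method"], hit["file"], hit["line"])
```

Counting the hits per day would show whether the error started around the time the Google Sheet stopped updating.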

What ODK tool and version are you using? And on what device and operating system version?

ODK Aggregate v1.4.15 running on the Google Cloud.

What steps can we take to reproduce the problem?

I can provide login credentials so you can see the cloud console and/or ODK Aggregate so you can see the problem first hand.

What have you tried to fix the problem?

I tried to delete the problematic publisher and create a new one. I'm thinking about redeploying ODK Aggregate as a last-ditch effort, but I'm sure you guys will have a better idea than that!

Anything else we should know or have? If you have a test form or screenshots or logs, attach here.

Click here to view a link to the log viewer.

Just in case the link to the log viewer doesn't work, here is a screenshot of what I think is the problem.